The Johnson-Lindenstrauss Bound for Embedding with Random Projections

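The Johnson-Lindenstrauss lemma (JL lemma for short) states that any high-dimensional dataset can be randomly projected into a lower-dimensional Euclidean space while controlling the distortion in the pairwise distances. In other words, after the points are mapped from the high-dimensional space to the low-dimensional one, the distance between any two points is approximately preserved.

Theoretical bounds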

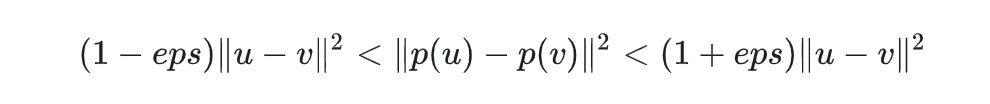

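The distortion introduced by a random projection p is bounded by the fact that p defines an eps-embedding with good probability, as expressed by the inequality shown below.

Here u and v are any rows taken from a dataset of shape [n_samples, n_features], and p is a projection by a random Gaussian N(0, 1) matrix of shape [n_components, n_features] (or by a sparse Achlioptas matrix).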

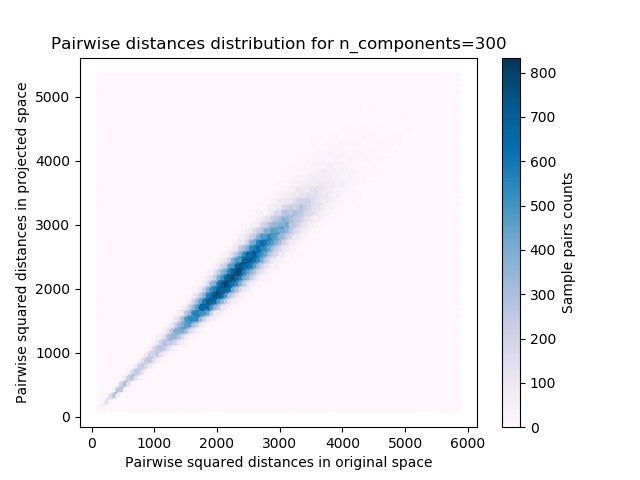
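For readers who prefer the condition in symbols, the eps-embedding property is usually written as the inequality below. This is the standard statement for squared Euclidean distances, given here as a reference rather than transcribed from the figure; it uses the same u, v, p, and eps as the text above.

```latex
(1 - \mathrm{eps})\,\lVert u - v \rVert^2
  \;<\; \lVert p(u) - p(v) \rVert^2
  \;<\; (1 + \mathrm{eps})\,\lVert u - v \rVert^2
```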

The minimum number of components that guarantees the eps-embedding is given by:

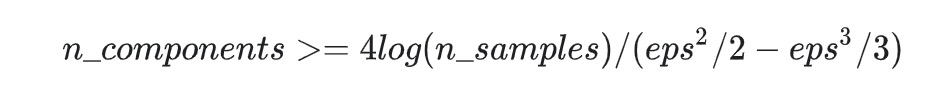
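As a reference, the bound that scikit-learn's johnson_lindenstrauss_min_dim is documented to evaluate can be written as follows. It is stated from that documentation rather than transcribed from the figure, and the worked numbers after it are plain arithmetic on the same formula.

```latex
n\_components \;\ge\; \frac{4 \,\ln(n\_samples)}{\mathrm{eps}^2 / 2 \;-\; \mathrm{eps}^3 / 3}
```

For example, with n_samples = 500 and eps = 0.1, the numerator is 4 ln(500) ≈ 24.86 and the denominator is 0.005 − 0.000333 ≈ 0.004667, which gives roughly 5,300 dimensions.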

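Note (see the figures produced by the code later in this article):

- The first plot shows that as the number of samples n_samples increases, the minimum number of dimensions n_components needed to guarantee an eps-embedding increases logarithmically.

- The second plot shows that, for a given number of samples n_samples, increasing the admissible distortion eps drastically reduces the minimum number of dimensions n_components.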
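The two trends described above can be checked numerically without plotting. The sketch below uses a hypothetical helper, jl_min_dim, that evaluates 4 ln(n_samples) / (eps²/2 − eps³/3) with the standard library only; truncating the result to an integer is an assumption made for this sketch, and the script in this article calls scikit-learn's johnson_lindenstrauss_min_dim instead.

```python
import math


def jl_min_dim(n_samples, eps):
    """JL bound on n_components, truncated to an integer.

    Hypothetical helper written for this sketch; it evaluates
    4 * ln(n_samples) / (eps**2 / 2 - eps**3 / 3).
    """
    if not 0.0 < eps < 1.0:
        raise ValueError("eps must lie strictly between 0 and 1")
    denominator = eps ** 2 / 2 - eps ** 3 / 3
    return int(4 * math.log(n_samples) / denominator)


# Effect of n_samples at fixed eps: every factor of 10 in n_samples adds
# roughly the same number of dimensions (logarithmic growth).
for exponent in range(2, 7):
    n = 10 ** exponent
    print("n_samples=%8d  eps=0.5  ->  %d" % (n, jl_min_dim(n, 0.5)))

# Effect of eps at fixed n_samples: a looser distortion needs far fewer
# dimensions.
for eps in (0.1, 0.3, 0.5, 0.9):
    print("n_samples=1000000  eps=%.1f  ->  %d"
          % (eps, jl_min_dim(10 ** 6, eps)))
```

The number of features never appears in the formula, which is why the bound depends only on n_samples and eps.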

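Empirical validation

We validate the above bounds on the handwritten digits dataset or on the 20 newsgroups text document (TF-IDF word frequencies) dataset:

- For the digits dataset, the 8×8 gray-level pixel data of 500 handwritten digit images is randomly projected to spaces of various larger dimensions n_components.

- For the 20 newsgroups dataset, 500 documents with about 100k features in total are projected with a sparse random matrix to smaller Euclidean spaces, with various values for the target dimension n_components.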
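To make "sparse random matrix" concrete, here is a minimal sketch of the Achlioptas construction in plain Python. The helper achlioptas_matrix and its parameters are hypothetical names for this illustration. The script itself relies on SparseRandomProjection, which stores the matrix in a sparse format and chooses its own density, so the fixed s = 3 below is an assumption of the sketch, not the script's setting.

```python
import math
import random


def achlioptas_matrix(n_components, n_features, s=3, seed=0):
    """Build a random projection matrix with mostly zero entries.

    Hypothetical helper for illustration. Each entry is
      +sqrt(s / n_components) with probability 1 / (2 * s),
      -sqrt(s / n_components) with probability 1 / (2 * s),
      0                       with probability 1 - 1 / s.
    s = 3 is the Achlioptas choice.
    """
    rng = random.Random(seed)
    scale = math.sqrt(s / n_components)
    matrix = []
    for _ in range(n_components):
        row = []
        for _ in range(n_features):
            r = rng.random()
            if r < 1 / (2 * s):
                row.append(scale)
            elif r < 1 / s:
                row.append(-scale)
            else:
                row.append(0.0)
        matrix.append(row)
    return matrix


matrix = achlioptas_matrix(n_components=50, n_features=200)
entries = [value for row in matrix for value in row]
zero_fraction = sum(1 for value in entries if value == 0.0) / len(entries)
print("fraction of zero entries: %.3f" % zero_fraction)
```

With s = 3 about two thirds of the entries are zero, so projecting a vector skips most multiplications, which matters for inputs as wide as the 20 newsgroups features.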

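The default dataset in the example is the digits dataset. To run the example on the 20 newsgroups dataset, pass the --twenty-newsgroups command-line argument to this script.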

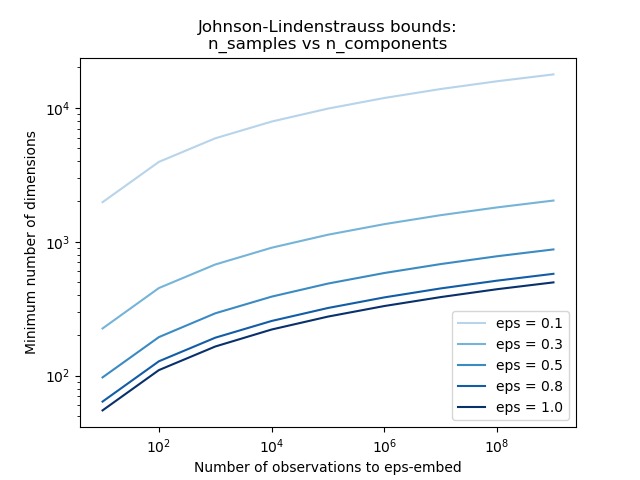

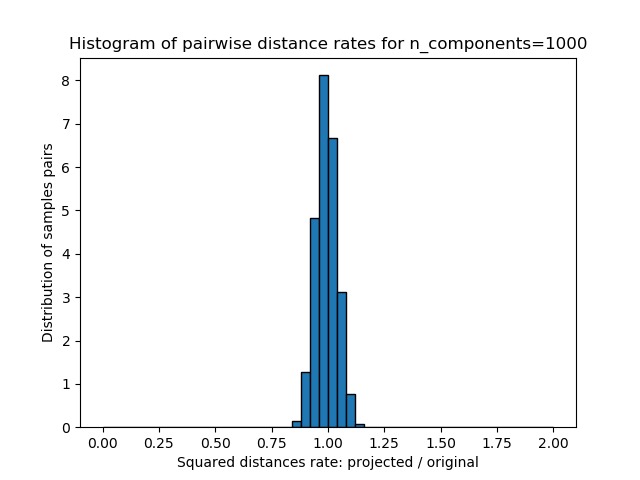

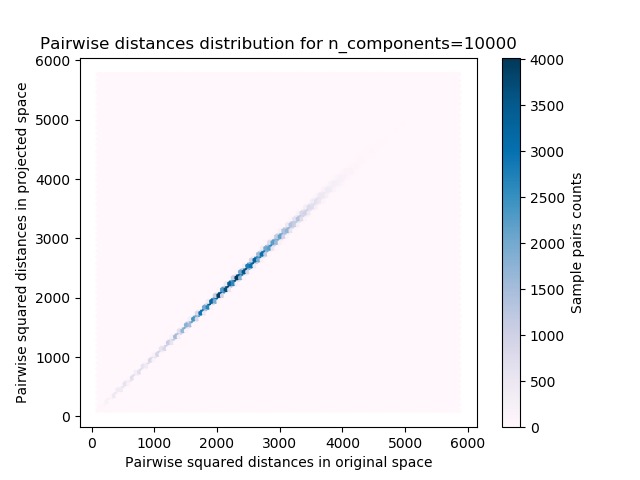

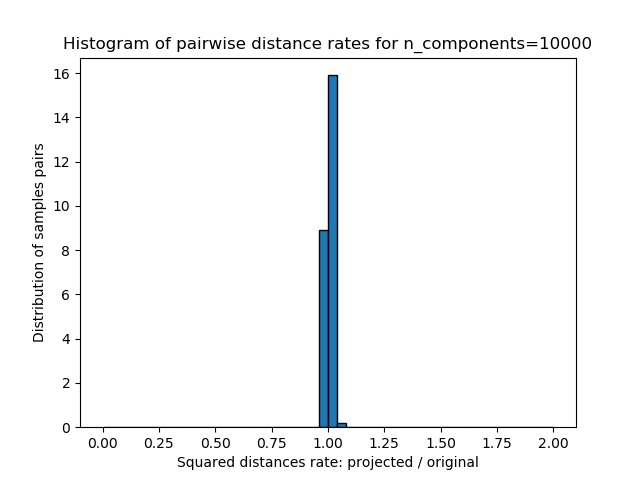

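For each value of n_components, we plot:

- The 2D distribution of sample pairs, with the pairwise distances in the original space and in the projected space as the x and y axes respectively.

- A 1D histogram of the ratio of those distances (projected / original).

We can see that for low values of n_components the distribution is wide, with many distorted pairs and a skewed distribution (caused by the hard limit of a zero ratio on the left, since distances are always positive), while for larger values of n_components the distortion is controlled and the distances are well preserved by the random projection.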
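The narrowing of the ratio distribution can be reproduced on a toy scale. The sketch below is not the script's method: it swaps in a dense Gaussian projection written with the standard library, on synthetic uniform data, and every function name in it is hypothetical. It only illustrates that the spread of projected / original squared distances shrinks as n_components grows.

```python
import math
import random
import statistics


def gaussian_projection(n_components, n_features, rng):
    """Random N(0, 1) matrix scaled by 1 / sqrt(n_components)."""
    scale = 1.0 / math.sqrt(n_components)
    return [[rng.gauss(0.0, 1.0) * scale for _ in range(n_features)]
            for _ in range(n_components)]


def squared_norm(vector):
    return sum(x * x for x in vector)


def project(matrix, vector):
    return [sum(m * x for m, x in zip(row, vector)) for row in matrix]


def distance_ratios(n_components, n_features=20, n_pairs=100, seed=0):
    """Ratios ||p(u) - p(v)||^2 / ||u - v||^2 for random pairs (u, v)."""
    rng = random.Random(seed)
    matrix = gaussian_projection(n_components, n_features, rng)
    ratios = []
    for _ in range(n_pairs):
        u = [rng.random() for _ in range(n_features)]
        v = [rng.random() for _ in range(n_features)]
        diff = [a - b for a, b in zip(u, v)]
        # The projection is linear, so p(u) - p(v) equals p(u - v).
        ratios.append(squared_norm(project(matrix, diff)) / squared_norm(diff))
    return ratios


for k in (10, 400):
    ratios = distance_ratios(k)
    print("n_components=%4d  mean ratio=%.2f  std=%.2f"
          % (k, statistics.mean(ratios), statistics.stdev(ratios)))
```

The 1 / sqrt(n_components) scaling is what keeps the expected ratio close to 1; without it the projected distances would grow with the target dimension.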

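Remarks

According to the JL lemma, projecting 500 samples without too much distortion requires at least several thousand dimensions, irrespective of the number of features of the original dataset.

Hence using random projections on the digits dataset, which has only 64 features in the input space, does not make sense: it does not allow for dimensionality reduction in this case. That is why the experiment on the digits dataset increases the dimension instead. On the 20 newsgroups dataset, on the other hand, the dimensionality can be decreased from 56436 down to 10000 while reasonably preserving pairwise distances.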
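One way to read these remarks is to turn the bound around: for 500 samples and a given n_components, which distortion eps does the bound actually guarantee? The sketch below solves eps²/2 − eps³/3 = 4 ln(n_samples) / n_components by bisection. The helper guaranteed_eps is a hypothetical name for this illustration and is not part of scikit-learn.

```python
import math


def guaranteed_eps(n_samples, n_components, tol=1e-9):
    """Smallest eps in (0, 1) for which the JL bound holds, or None.

    Hypothetical helper: solves
        eps**2 / 2 - eps**3 / 3 = 4 * ln(n_samples) / n_components
    by bisection. The left-hand side is increasing on (0, 1).
    """
    target = 4 * math.log(n_samples) / n_components
    if target >= 1 / 2 - 1 / 3:  # left-hand side evaluated at eps = 1
        return None
    low, high = 0.0, 1.0
    while high - low > tol:
        mid = (low + high) / 2
        if mid ** 2 / 2 - mid ** 3 / 3 < target:
            low = mid
        else:
            high = mid
    return high


for k in (64, 300, 1000, 10000):
    eps = guaranteed_eps(500, k)
    label = "no eps below 1" if eps is None else "eps ~ %.3f" % eps
    print("n_samples=500  n_components=%5d  ->  %s" % (k, label))
```

With 64 components the bound guarantees nothing for 500 samples, which matches the remark about the digits dataset. The standard deviations of the distance ratio reported in the run output (0.08 at 300 components, 0.01 at 10000) are well below the eps values obtained this way, as expected from a worst-case guarantee.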

Code implementation [Python]

# -*- coding: utf-8 -*-

import sys
from time import time

import numpy as np
import matplotlib.pyplot as plt

from sklearn.random_projection import johnson_lindenstrauss_min_dim
from sklearn.random_projection import SparseRandomProjection
from sklearn.datasets import fetch_20newsgroups_vectorized
from sklearn.datasets import load_digits
from sklearn.metrics.pairwise import euclidean_distances

# `normed` was deprecated in favor of `density` in matplotlib histograms.
# The original version check relied on distutils.version.LooseVersion, but
# distutils is no longer available from Python 3.12 on, so `density` is used
# directly here.
density_param = {'density': True}

# Part 1: plot the theoretical dependency between n_components_min and
# n_samples

# range of admissible distortions
eps_range = np.linspace(0.1, 0.99, 5)
colors = plt.cm.Blues(np.linspace(0.3, 1.0, len(eps_range)))

# range of number of samples (observations) to embed
n_samples_range = np.logspace(1, 9, 9)

plt.figure()
for eps, color in zip(eps_range, colors):
    min_n_components = johnson_lindenstrauss_min_dim(n_samples_range, eps=eps)
    plt.loglog(n_samples_range, min_n_components, color=color)

plt.legend(["eps = %0.1f" % eps for eps in eps_range], loc="lower right")
plt.xlabel("Number of observations to eps-embed")
plt.ylabel("Minimum number of dimensions")
plt.title("Johnson-Lindenstrauss bounds:\nn_samples vs n_components")

# range of admissible distortions
eps_range = np.linspace(0.01, 0.99, 100)

# range of number of samples (observations) to embed
n_samples_range = np.logspace(2, 6, 5)
colors = plt.cm.Blues(np.linspace(0.3, 1.0, len(n_samples_range)))

plt.figure()
for n_samples, color in zip(n_samples_range, colors):
    min_n_components = johnson_lindenstrauss_min_dim(n_samples, eps=eps_range)
    plt.semilogy(eps_range, min_n_components, color=color)

plt.legend(["n_samples = %d" % n for n in n_samples_range], loc="upper right")
plt.xlabel("Distortion eps")
plt.ylabel("Minimum number of dimensions")
plt.title("Johnson-Lindenstrauss bounds:\nn_components vs eps")

# Part 2: perform sparse random projection of some digits images, which are
# low dimensional and dense, or of documents from the 20 newsgroups dataset,
# which is both high dimensional and sparse

if '--twenty-newsgroups' in sys.argv:
    # Need an internet connection hence not enabled by default
    data = fetch_20newsgroups_vectorized().data[:500]
else:
    data = load_digits().data[:500]

n_samples, n_features = data.shape
print("Embedding %d samples with dim %d using various random projections"
      % (n_samples, n_features))

n_components_range = np.array([300, 1000, 10000])
dists = euclidean_distances(data, squared=True).ravel()

# select only non-identical sample pairs
nonzero = dists != 0
dists = dists[nonzero]

for n_components in n_components_range:
    t0 = time()
    rp = SparseRandomProjection(n_components=n_components)
    projected_data = rp.fit_transform(data)
    print("Projected %d samples from %d to %d in %0.3fs"
          % (n_samples, n_features, n_components, time() - t0))
    if hasattr(rp, 'components_'):
        # memory used by the sparse random matrix (values plus indices)
        n_bytes = rp.components_.data.nbytes
        n_bytes += rp.components_.indices.nbytes
        print("Random matrix with size: %0.3fMB" % (n_bytes / 1e6))

    projected_dists = euclidean_distances(
        projected_data, squared=True).ravel()[nonzero]

    plt.figure()
    plt.hexbin(dists, projected_dists, gridsize=100, cmap=plt.cm.PuBu)
    plt.xlabel("Pairwise squared distances in original space")
    plt.ylabel("Pairwise squared distances in projected space")
    plt.title("Pairwise distances distribution for n_components=%d" %
              n_components)
    cb = plt.colorbar()
    cb.set_label('Sample pairs counts')

    rates = projected_dists / dists
    print("Mean distances rate: %0.2f (%0.2f)"
          % (np.mean(rates), np.std(rates)))

    plt.figure()
    plt.hist(rates, bins=50, range=(0., 2.), edgecolor='k', **density_param)
    plt.xlabel("Squared distances rate: projected / original")
    plt.ylabel("Distribution of samples pairs")
    plt.title("Histogram of pairwise distance rates for n_components=%d" %
              n_components)

    # TODO: compute the expected value of eps and add them to the previous plot
    # as vertical lines / region

plt.show()

Code execution

Approximate running time of the code: 0 minutes 1.837 seconds.

The text output of the code is as follows:

Embedding 500 samples with dim 64 using various random projections
Projected 500 samples from 64 to 300 in 0.016s
Random matrix with size: 0.028MB
Mean distances rate: 0.97 (0.08)
Projected 500 samples from 64 to 1000 in 0.048s
Random matrix with size: 0.096MB
Mean distances rate: 0.99 (0.05)
Projected 500 samples from 64 to 10000 in 0.594s
Random matrix with size: 0.964MB
Mean distances rate: 1.01 (0.01)

The figures produced by the code are as follows:

Source code download

- Python source file: plot_johnson_lindenstrauss_bound.py

- Jupyter Notebook source file: plot_johnson_lindenstrauss_bound.ipynb